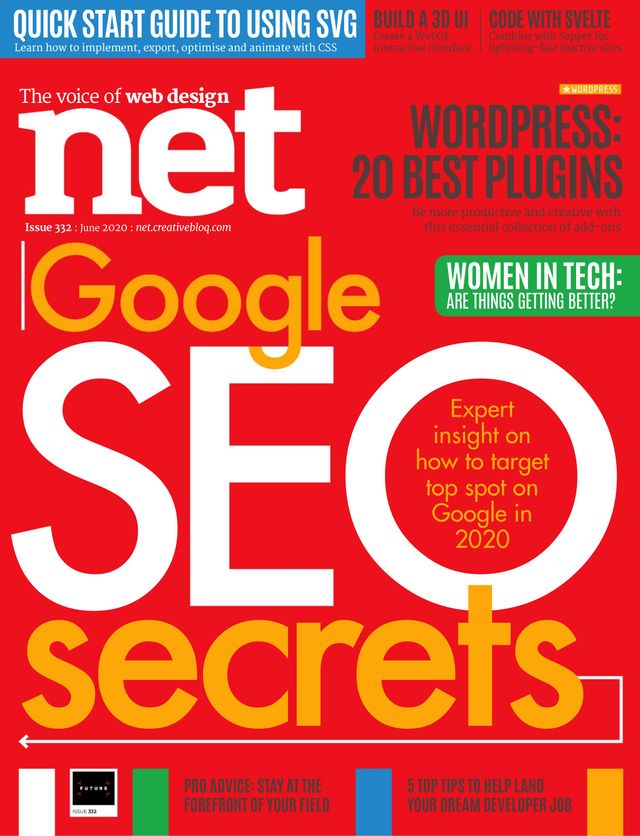

NET Magazine - June 2020

In this issue

SHOWCASE YOUR DEV SKILLS

Aude Barral shares 5 top tips for landing your dream developer job.

GOOGLE SEO SECRETS

Experienced SEO consultant Carl Hendy offers expert advice on targeting the top spot in search.

NET Magazine Description:

net is the world's best-selling magazine for web designers and developers. Every issue boasts pages of tutorials covering topics such as CSS, PHP, Flash, JavaScript, HTML5 and web graphics written by many of the world’s most respected web designers and creative design agencies. Interviews, features and pro tips also offer advice on SEO, social media marketing, web hosting, the cloud, mobile development and apps, making it the essential guide for practical web design.

Recent issues

May 2020

April 2020

March 2020

February 2020

January 2020

December 2019

November 2019

October 2019

September 2019

Summer 2019

August 2019

July 2019

June 2019

May 2019

April 2019

March 2019

February 2019

January 2019

December 2018

November 2018

October 2018

September 2018

Summer 2018

August 2018

July 2018

June 2018

May 2018

April 2018

March 2018

Related Titles

T3 UK

MacFormat UK

What Hi-Fi UK

BBC Science Focus

Classic & Sports Car

What Car? UK

PC Pro

Practical Boat Owner

Stuff UK

BBC Sky at Night Magazine

Edge UK

Essential Guide to Outdoor photography

The Ultimate Guide To Android Tablets

The Photographer's Guide to Photoshop

Pocket Guide to Digital Photography

The Ultimate Guide to Graphic Design

Nokia Smartphones

The Independent Guide to the Mac

Ultimate Guide to Cloud Computing

The Ultimate Guide to Bicycle Maintenance

Linux The Complete Manual 2nd edition

The Ultimate Guide to Digital Photography

Build a better PC 2011

The Complete Guide to the iPad mini

Ultimate Guide to Microsoft Office 2010

The Complete Guide to the iPad 4

The Ultimate Guide to BlackBerry

The Ultimate Guide to Windows 8

The Complete Guide to the iPad 2

The Complete Guide to the iPad 3