
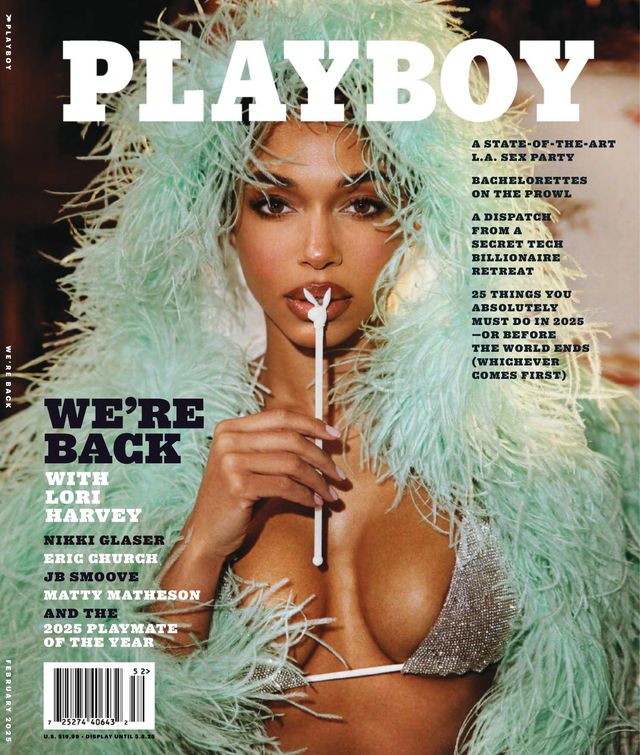

Playboy Magazine US - February 2025

Go Unlimited with Magzter GOLD

Read Playboy Magazine US along with 10,000+ other magazines & newspapers with just one subscription


Cancel Anytime (No Commitments)

If you are not happy with the subscription, you can email us at help@magzter.com within 7 days of the subscription start date for a full refund. No questions asked - Promise! (Note: Not applicable for single-issue purchases.)

Digital Subscription, Instant Access

Subscribe now to instantly start reading on the Magzter website, iOS, Android, and Amazon apps.

Verified Secure Payment

Magzter is a verified Stripe merchant.

In this issue

What’s Inside:

Unparalleled Features: In the tradition of the PLAYBOY legacy, we profile top artists, performers, and influencers who are shaping 2025 and beyond.

Provocative Art: PLAYBOY's captivating photography ascends to a new realm with imagery that is bold, classic, provocative, natural, playful—and absolutely defines modern beauty.

The Lifestyle You Want: Fashion, travel, cars, and culture: We’ve curated a guide to the top-shelf lifestyle that PLAYBOY readers around the world expect.

Irreverent Heritage: As a world-renowned iconoclast, PLAYBOY has always written its own rules and shrugged off the norm. Join us for the newest draft.

Playboy Magazine US Description:

The reimagined Playboy magazine now includes a completely modern editorial and design approach, and, for the first time in its history, no longer features nudity in its pages. Playboy continues to publish sexy, seductive pictorials of the world’s most beautiful women, including its iconic Playmates, shot by some of today’s most renowned photographers. The magazine also remains committed to its award-winning mix of long-form journalism, interviews and fiction.

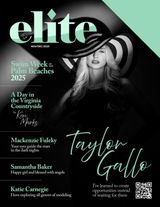

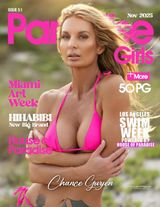


Related Titles

Maxim US

Kandy Magazine

Mancave Playbabes

Playcute

Mistress

Blow Fish

Imporium

Girl Crush

Bomber Club

FHM USA

BLACK WIDOW MAGAZINE

purple candy magazine

LINGERIE PLUS MAGAZINE

IOB (INSIDE OUT BABES) MAGAZINE

PLAYBABES SPECIAL EDITIONS

MANCAVE ELITE

Human Canvas Magazine

IMPLIED PLUS MAGAZINE

Model FORCE Magazine

Chocolate Bottoms Magazine

Backside Magazine

Casa

Morgans

Armani

SWEET 21

BIKINI PLUS

BOAST US

Fantasy Factory magazine

G Crown

Paradise Girls Magazine